GKE Clusters

Google Kubernetes Engine (GKE) provides a managed environment for deploying, managing, and scaling containerized applications on Google Cloud infrastructure.

GKE Prime

In GKE, a cluster consists of at least one control plane and multiple worker machines called nodes.

Cluster Modes

GKE clusters have two modes of operation:

- Autopilot: GCP manages the entire cluster and node infrastructure for you.

- Standard: GCP provides you with node configuration flexibility and full control over managing your clusters and node infrastructure.

An in-depth comparison between Autopilot and Standard is provided at the following link:

Cluster availability type

- Zonal clusters have a single control plane in a single zone. They come in two variants:

  - A single-zone cluster has a single control plane running in one zone. This control plane manages workloads on nodes running in the same zone.

  - A multi-zonal cluster has a single replica of the control plane running in a single zone, and has nodes running in multiple zones.

- A regional cluster runs multiple replicas of the control plane in multiple zones within a given region. Nodes in a regional cluster can run in multiple zones or a single zone depending on the configured node locations.

Use regional clusters to run your production workloads, as they offer higher availability than zonal clusters.

Control Plane

The control plane runs the control plane processes, including the Kubernetes API server, scheduler, and core resource controllers.

You interact with the cluster through Kubernetes API calls, and the control plane runs the API Server process to handle those requests.

The API server process is the hub for all communication in the cluster. All internal cluster processes (such as the cluster nodes, system components, and application controllers) act as clients of the API server; the API server is the single “source of truth” for the entire cluster.

The control plane schedules workloads, like containerized applications, and manages the workloads’ lifecycle, scaling, and upgrades. The control plane also manages network and storage resources for those workloads.

The control plane has a public or private IP address based on the type of cluster, version, and creation date.

Nodes

A cluster has one or more nodes, which are the worker machines that run containerized applications.

A node runs the container runtime and the Kubernetes node agent (kubelet), which communicates with the control plane and is responsible for starting and running containers scheduled on the node:

- Each node is a standard Compute Engine machine type. The default type is e2-medium (configurable).

- Each node runs a specialized OS image (configurable).

- Each node has an IP address assigned from the cluster’s VPC network.

- Each node has a pool of IP addresses that GKE assigns Pods running on that node.

Pods

A Pod is the most basic unit within a Kubernetes cluster. A Pod runs one or more containers. Pods run on nodes.

Each Pod has a single IP address assigned from the Pod CIDR range of its node. This IP address is shared by all containers running within the Pod, and connects them to other Pods running in the cluster.

Service

Kubernetes uses labels to group multiple related Pods into a logical unit called a Service.
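The label grouping described above can be illustrated with a minimal Python sketch. It only models equality-based selectors, and the Pod names and labels are hypothetical; it is not how Kubernetes itself is implemented.

```python
def selects(selector: dict[str, str], pod_labels: dict[str, str]) -> bool:
    """Return True when every key/value pair of the selector is present on the Pod."""
    return all(pod_labels.get(key) == value for key, value in selector.items())


# Hypothetical Pods, described only by their labels.
pods = {
    "nginx-1": {"app": "nginx", "tier": "web"},
    "nginx-2": {"app": "nginx"},
    "redis-1": {"app": "redis"},
}

# A Service selecting on app=nginx groups both nginx Pods, whatever other labels they carry.
service_selector = {"app": "nginx"}
backends = sorted(name for name, labels in pods.items() if selects(service_selector, labels))
print(backends)
```

A Pod may carry extra labels and still be selected; what matters is that all of the selector's pairs match.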

Each Service has an IP address, called the ClusterIP, assigned from the cluster’s VPC network.

Pods do not expose an external IP address by default. GKE provides three different types of load balancers to control ingress:

- External load balancers

- Internal load balancers

- HTTP(S) load balancers are specialized external load balancers used for HTTP(S) traffic. They use an Ingress resource rather than a forwarding rule to route traffic to a Kubernetes node.

GKE Networking

Services, Pods, containers, and nodes communicate using IP addresses and ports:

- VPC-native clusters use alias IP ranges to route traffic from one Pod to another.

- Routes-based clusters route traffic from one Pod to another using the VPC route table.

- node subnet: primary IP range for all the nodes in a cluster.

- control plane IP address range: an RFC 1918 /28 subnet that shouldn’t overlap any other CIDR in the VPC network.

- Pod IP address range: IP range for all Pods in your cluster (cluster CIDR).

- service IP address range: the IP address range for all services in your cluster (services CIDR).

Recommendations

- Plan the required IP address space.

- Reserve enough IP address space for the cluster autoscaler.

- Avoid overlaps with IP addresses used in other environments.

- Create a load balancer subnet.

- Use non-RFC 1918 space if needed.

- Use custom subnet mode.

- Plan Pod density per node.
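As a rough aid for the planning recommendations above, the following Python sketch checks a set of ranges for overlaps using the standard `ipaddress` module. The node subnet and control plane ranges are the ones used later in this post; the Pod, Service, and "legacy" ranges are hypothetical values chosen only for illustration.

```python
import ipaddress
from itertools import combinations


def find_overlaps(ranges: dict[str, str]) -> list[tuple[str, str]]:
    """Return every pair of range names whose CIDR blocks overlap."""
    nets = {name: ipaddress.ip_network(cidr) for name, cidr in ranges.items()}
    return [
        (a, b)
        for (a, net_a), (b, net_b) in combinations(nets.items(), 2)
        if net_a.overlaps(net_b)
    ]


plan = {
    "nodes": "100.64.0.0/21",             # primary subnet range from this post
    "control-plane": "192.168.254.0/28",  # control plane range from this post
    "pods": "100.64.64.0/18",             # hypothetical secondary range
    "services": "100.64.16.0/24",         # hypothetical secondary range
}
print(find_overlaps(plan))

# Adding a hypothetical range from another environment that collides with the nodes.
conflicting = dict(plan, legacy="100.64.4.0/24")
print(find_overlaps(conflicting))
```

Running a check like this before creating subnets is cheaper than discovering an overlap after the cluster exists, since secondary ranges are hard to change afterwards.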

Implied Firewall rules

Every VPC network has two implied IPv4 firewall rules:

- Implied IPv4 allow egress rule. An egress rule whose action is allow, destination is 0.0.0.0/0, and priority is the lowest possible (65535) lets any instance send traffic to any destination.

- Implied IPv4 deny ingress rule. An ingress rule whose action is deny, source is 0.0.0.0/0, and priority is the lowest possible (65535) protects all instances by blocking incoming connections to them.

Implied rules are not shown in the Cloud console.

GKE Firewall rules

GKE creates firewall rules automatically when creating the following resources:

- GKE clusters

- GKE Services

- GKE Ingresses

The priority for all automatically created firewall rules is 1000.
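To see how a priority of 1000 interacts with the implied rules at 65535, here is a simplified Python model of rule evaluation. It assumes that the matching rule with the lowest priority number wins and that deny wins a tie, and it ignores protocols, ports, and target tags. The `gke-example-all` rule and its source range are hypothetical.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    name: str
    direction: str  # "INGRESS" or "EGRESS"
    action: str     # "allow" or "deny"
    priority: int   # 0 is the highest priority, 65535 the lowest
    cidr: str       # source range for ingress, destination range for egress


IMPLIED = [
    Rule("implied-allow-egress", "EGRESS", "allow", 65535, "0.0.0.0/0"),
    Rule("implied-deny-ingress", "INGRESS", "deny", 65535, "0.0.0.0/0"),
]


def evaluate(rules: list[Rule], direction: str, peer_ip: str) -> str:
    """Return the action of the winning rule for traffic to or from peer_ip."""
    peer = ipaddress.ip_address(peer_ip)
    matching = [
        r for r in rules
        if r.direction == direction and peer in ipaddress.ip_network(r.cidr)
    ]
    # Lowest priority number first; on a tie, a deny rule sorts before an allow rule.
    winner = min(matching, key=lambda r: (r.priority, r.action != "deny"))
    return winner.action


# Hypothetical automatically created rule allowing traffic from a Pod range.
gke_rule = Rule("gke-example-all", "INGRESS", "allow", 1000, "100.64.64.0/18")

print(evaluate(IMPLIED, "INGRESS", "100.64.64.10"))
print(evaluate(IMPLIED + [gke_rule], "INGRESS", "100.64.64.10"))
print(evaluate(IMPLIED + [gke_rule], "INGRESS", "203.0.113.5"))
```

In this model the priority-1000 rule overrides the implied deny only for sources inside its range; everything else still falls through to the implied rule.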

Recommendations

- Choose a private cluster type

- Minimize the cluster control plane exposure

- Authorize access to the control plane

- Restrict cluster traffic using network policies.

- Enable Google Cloud Armor security policies for Ingress.

GKE Pricing

The cluster management fee of $0.10 per cluster per hour (charged in 1 second increments) applies to all GKE clusters.
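As a quick back-of-the-envelope check, the fee above can be computed per second of cluster lifetime. This sketch only covers the management fee quoted in the text; node costs, free tiers, and credits are deliberately left out, and the function name is made up for the example.

```python
from decimal import Decimal

HOURLY_FEE = Decimal("0.10")  # USD per cluster per hour, as stated above


def management_fee(seconds: int, clusters: int = 1) -> Decimal:
    """Management fee in USD for the given runtime, billed per second."""
    return (HOURLY_FEE * seconds * clusters / 3600).quantize(Decimal("0.01"))


one_hour = management_fee(3600)
thirty_days = management_fee(30 * 24 * 3600)
print(one_hour, thirty_days)
```

`Decimal` is used instead of floats so that the cents do not pick up binary rounding noise.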

Aviatrix Overview

Aviatrix is a cloud network platform that brings multi-cloud networking, security, and operational visibility capabilities that go beyond what any cloud service provider offers. Aviatrix software leverages AWS, Azure, GCP and Oracle Cloud APIs to interact with and directly program native cloud networking constructs, abstracting the unique complexities of each cloud to form one network data plane, and adds advanced networking, security and operational features enterprises require.

FireNet

Aviatrix Transit FireNet allows the deployment of 3rd party firewalls onto the Aviatrix transit architecture.

Transit FireNet works the same way as the Firewall Network where traffic in and out of the specified Spoke is forwarded to the firewall instances for inspection or policy application.

Aviatrix Configuration

FireNet deployment is covered in the following blog posts:

Deploying an Aviatrix FireNet on GCP with CheckPoint

Deploying an Aviatrix FireNet on GCP with Fortinet FortiGate

“Terraforming” an Aviatrix FireNet on GCP with PANs

The subnet has a primary range for the nodes of the cluster and it should exist prior to cluster creation.

The Pod and service IP address ranges are represented as distinct secondary ranges of your subnet.

VPC (gcp-spoke100-us-east4) and subnet (100.64.0.0/21) created for the GKE deployment:

The following table lists the size of the CIDR block and the corresponding number of available IP addresses that Kubernetes assigns to nodes based on the maximum Pods per node:
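The relationship behind that table can be sketched in Python. The sketch assumes the rule described in the GKE flexible Pod CIDR documentation: each node receives the smallest CIDR block that holds at least twice its maximum number of Pods. The 8 to 256 bounds and the function names are assumptions made for this example.

```python
def pod_cidr_prefix_per_node(max_pods_per_node: int) -> int:
    """Prefix length of the Pod CIDR block assigned to each node."""
    if not 8 <= max_pods_per_node <= 256:
        raise ValueError("max Pods per node is assumed to be between 8 and 256")
    needed = 2 * max_pods_per_node          # at least twice as many addresses as Pods
    host_bits = (needed - 1).bit_length()   # smallest power of two >= needed
    return 32 - host_bits


def max_nodes(pod_range_prefix: int, max_pods_per_node: int) -> int:
    """How many per-node blocks fit in a Pod secondary range of the given size."""
    per_node = pod_cidr_prefix_per_node(max_pods_per_node)
    if per_node < pod_range_prefix:
        return 0
    return 2 ** (per_node - pod_range_prefix)


for pods in (8, 16, 32, 64, 110, 256):
    print(pods, f"/{pod_cidr_prefix_per_node(pods)}")

# A /21 Pod range with 110 Pods per node leaves room for only a handful of nodes.
print(max_nodes(21, 110))
```

This is why Pod density and the size of the Pod secondary range have to be planned together: lowering the maximum Pods per node shrinks each node's block and lets the same range serve more nodes.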

GKE Deployment

Enable the Google Kubernetes Engine API:

Standard vs. Autopilot:

Cluster basics:

- I’m creating a regional cluster: nodes are created on all available zones.

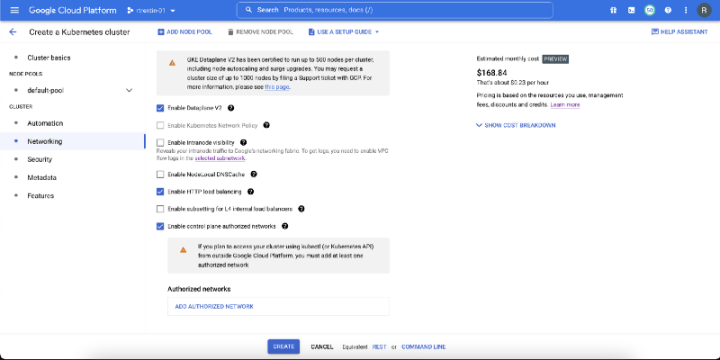

Networking:

- Private vs. public cluster: private cluster nodes have only internal IP addresses. Internal IP addresses for nodes come from the primary IP address range of the subnet. Pod IP addresses and Service IP addresses come from two secondary IP address ranges of that same subnet.

- Private clusters have the option to lock down external access to the cluster control plane. There is still an external IP address used by Google for cluster management purposes, but the IP address is not accessible to anyone.

- Private clusters also have the option to enable control plane global access, which uses private endpoints to grant secure access to the cluster.

- Disable Default SNAT: supports the use of privately used public IP (PUPI) ranges.

- Cluster default pod address range: All pods in the cluster are assigned an IP address from this range.

The supported size is between /9 and /21.

- Service Address Range: Cluster services will be assigned an IP address from this IP address range.

The supported size is between /16 and /27.

- Dataplane V2 uses eBPF to provide network security, visibility, and scalability. This is the recommended option and will be enabled by default in a future release.

- HTTP Load Balancing add-on is required to use the Google Cloud Load Balancer with Kubernetes Ingress.

- Enabling intranode visibility makes your intranode Pod-to-Pod traffic visible to the GCP networking fabric.

- Load balancer Subsetting: Internal load balancers will use a subset of nodes as backends to improve cluster scalability.
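The size limits listed above for the Pod and Service ranges can be checked before creating the cluster. The following Python sketch encodes only the two limits quoted in this post (/9 to /21 for Pods, /16 to /27 for Services); the function name and the sample ranges are hypothetical.

```python
import ipaddress

# (shortest prefix, longest prefix) allowed for each range, as quoted in this post.
LIMITS = {"pods": (9, 21), "services": (16, 27)}


def check_range(kind: str, cidr: str) -> bool:
    """Return True when the CIDR is well formed and its size is within the limits."""
    net = ipaddress.ip_network(cidr)  # raises ValueError if host bits are set
    shortest, longest = LIMITS[kind]
    return shortest <= net.prefixlen <= longest


print(check_range("pods", "100.64.64.0/18"))      # hypothetical Pod range
print(check_range("services", "100.64.16.0/24"))  # hypothetical Service range
print(check_range("pods", "10.0.0.0/24"))         # too small for a Pod range
```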

The gcloud command used to create the cluster was:

    gcloud beta container clusters create gke-us-east4-cluster-1 \
      --zone "us-east4-a" \
      --enable-private-nodes \
      --enable-private-endpoint \
      --master-ipv4-cidr "192.168.254.0/28" \
      --enable-ip-alias \
      --network "gcp-spoke100-us-east4" \
      --subnetwork "gcp-spoke100-us-east4-nodes" \
      --cluster-secondary-range-name "gcp-spoke100-us-east4-pod" \
      --services-secondary-range-name "gcp-spoke100-us-east4-services" \
      --enable-master-authorized-networks \
      --master-authorized-networks "172.24.130.0/23"

Do not forget to configure the control plane authorized networks.

By default, Aviatrix creates routes for the primary subnet range but not for secondary IP ranges:

For that reason, we need to customize the gateway's route table using the drop-down menu option “Customize Advertised Spoke VPC CIDRs”:

Only the CIDRs in this list (comma-separated if there is more than one) are advertised. In our case we need to enter the secondary ranges (Pod subnet and Services subnet) and the control plane range, in addition to any other existing subnet.
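Building that comma-separated value by hand is error prone, so here is a small Python sketch that validates and joins the ranges. The node subnet and control plane ranges come from this post; the Pod and Service ranges are hypothetical, and the assumption that the field takes no spaces after the commas has not been verified against the Aviatrix documentation.

```python
import ipaddress


def advertised_cidrs(*cidrs: str) -> str:
    """Validate each CIDR, drop duplicates, and join them with commas."""
    seen: list[str] = []
    for cidr in cidrs:
        net = str(ipaddress.ip_network(cidr.strip()))  # raises ValueError on a bad CIDR
        if net not in seen:
            seen.append(net)
    return ",".join(seen)


value = advertised_cidrs(
    "100.64.0.0/21",      # node subnet (primary range) from this post
    "100.64.64.0/18",     # hypothetical Pod range
    "100.64.16.0/24",     # hypothetical Service range
    "192.168.254.0/28",   # control plane range from this post
)
print(value)
```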

To verify the configuration, I’m going to examine the VPC route table and the gateway route table from another spoke:

- vpc route table:

- gateway route table:

The transit gateway spoke peer list is also a good place to check:

GKE creates a VPC peering connection between the node VPC and the control plane network:

If you plan to manage the cluster from a point outside the VPC, you have to change the peering configuration so that the custom routes from the VPC are exported to the peered control plane network:

The control-plane network will learn all the routes from the vpc:

and it exports the control-plane:

Testing

Connecting to the cluster:

- Run the gcloud command on your management station. You can get the command by going to GKE -> Clusters and clicking the three dots next to the cluster:

If kubectl is not already installed on your management station, install it.

Testing access to the control plane:

I’m going to create a Deployment that runs two nginx replicas:

    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: nginx
    spec:
      selector:
        matchLabels:
          app: nginx
      replicas: 2
      template:
        metadata:
          labels:
            app: nginx
        spec:
          containers:
          - name: nginx
            image: nginx
            ports:
            - containerPort: 80

After applying we have:

Now we need to expose it by creating a Service:

    apiVersion: v1
    kind: Service
    metadata:
      name: nginx
    spec:
      selector:
        app: nginx
      ports:
      - port: 80
        targetPort: 80
      type: LoadBalancer

After applying we have:

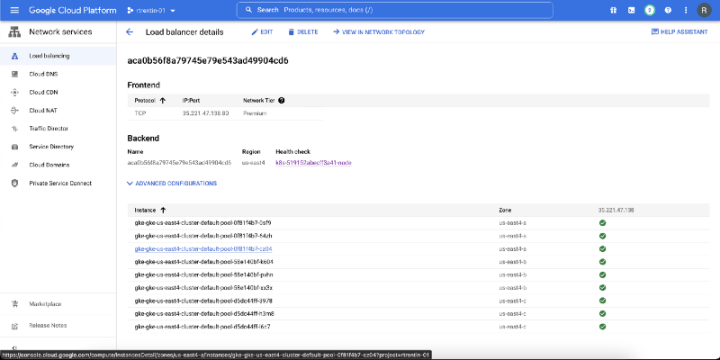

Examining the load balancer:

Finally:

References

https://cloud.google.com/kubernetes-engine/docs/concepts/kubernetes-engine-overview

https://cloud.google.com/kubernetes-engine/docs/best-practices/networking

https://cloud.google.com/vpc/docs/subnets#valid-ranges

https://cloud.google.com/sdk/gcloud/reference/container/clusters/create

https://cloud.google.com/kubernetes-engine/docs/how-to/flexible-pod-cidr
