Aviatrix Transit FireNet allows the deployment of 3rd party firewalls onto the Aviatrix transit architecture.

Transit FireNet works the same way as the Firewall Network: traffic in and out of the specified Spoke is forwarded to the firewall instances for inspection or policy application.

FireNet Design

GCP virtual private cloud (VPC) is a logically segmented global network within GCP that allows connected resources to communicate with each other. VPC networks contain one or more private IP subnets, each assigned to a GCP region. A subnet lives in only one region, but all subnets within a VPC network are reachable to the connected resources regardless of their location within GCP or their project membership.

Communication happens at Layer 3 because GCP forwards traffic by using host routes, even when the instances are part of a subnet that has a smaller subnet mask, such as /24. The reduced mask implies that all instances within the VPC network must communicate via a router. However, the intra-VPC traffic never transits the router. Instead, the router responds with a proxy Address Resolution Protocol (ARP) reply that tells the device how to communicate directly with the destination device.

By default, all instances connected to the VPC network communicate directly, even when they are part of different subnets. When you add a subnet, GCP automatically generates routes that facilitate communication within the VPC network as well as to the internet. These routes are known as system-generated routes.

The effect of GCP’s traffic forwarding capabilities is that a firewall can’t be inserted in the middle of intra-VPC traffic. For that reason, the FireNet design has four VPCs: the egress VPC, the management VPC, the LAN VPC, and the transit FireNet VPC.

Load balancers provide high availability and scalability to the design. GCP offers several load balancers that distribute traffic to a set of instances based on the type of traffic, but in this design we will focus on the network load balancer.

The load balancer comes in two types: internal and network. The difference between the two types is the source of the traffic. The internal load balancer only supports traffic originating from within the VPC network or coming across a VPN terminating within GCP. The network load balancer is reachable from any device on the internet.

Network load balancers are not members of VPC networks. Instead, like public IP addresses attached directly to an instance, GCP translates the destination of inbound traffic from the public frontend IP address directly to the private IP address of the instance. Because the public network load balancer is not attached to a specific VPC network, any instance in the project that is in the region can be part of the target pool, regardless of the VPC network to which the backend instance is attached.

Traffic from the load balancer to your instances has a source IP address in the ranges of 130.211.0.0/22 and 35.191.0.0/16. The destination IP address is the private IP address of the backend instance.
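
As a quick illustration of the ranges above, here is a minimal Python sketch that checks whether a source address belongs to one of them. The function name is made up for this example; only the two ranges come from the text.

```python
import ipaddress

# Source ranges quoted in the text above.
GCP_LB_SOURCE_RANGES = [
    ipaddress.ip_network("130.211.0.0/22"),
    ipaddress.ip_network("35.191.0.0/16"),
]


def is_gcp_lb_source(address: str) -> bool:
    """Return True if the address falls inside one of the load balancer source ranges."""
    ip = ipaddress.ip_address(address)
    return any(ip in network for network in GCP_LB_SOURCE_RANGES)
```

A check like this can help when reading packet captures on the firewall and deciding whether a flow is load balancer traffic or real client traffic.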

Aviatrix deploys and configures the internal load balancers for a FireNet.

Firewall Models

VM-Series firewalls on GCP are available in the following primary models: VM-100, VM-300, VM-500, and VM-700.

Number of Interfaces

GCP provides virtual machines with two, four, or eight network interfaces. The number of network interfaces is relevant for GCP deployments because you might attach an interface to each VPC network in the deployment.

Sizes such as the n1-standard-2 might work if CPU, memory, and disk capacity were the only concern, but they are limited by having only two network interfaces. Because VM-Series firewalls reserve an interface for management functionality, two-interface virtual machines are not a viable option. Four-interface virtual machines meet the minimum requirement of a management, public, and private interface.

You cannot add interfaces to a GCP Compute Engine virtual machine instance after deployment.
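
Because the interface count is fixed at deployment, it is worth sizing it up front. The sketch below is only an illustration of the reasoning in this section: it picks the smallest of the interface counts mentioned above that covers the interfaces the firewall needs. The function and variable names are hypothetical.

```python
# Interface counts the text says GCP offers per virtual machine.
AVAILABLE_NIC_COUNTS = (2, 4, 8)


def smallest_nic_count(required_interfaces: int) -> int:
    """Return the smallest available interface count that covers the requirement."""
    for count in AVAILABLE_NIC_COUNTS:
        if count >= required_interfaces:
            return count
    raise ValueError(f"no machine size offers {required_interfaces} interfaces")


# Management, public, and private interfaces, as listed in the text.
firewall_interfaces = ["management", "public", "private"]
print(smallest_nic_count(len(firewall_interfaces)))  # prints 4
```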

Interface Swap

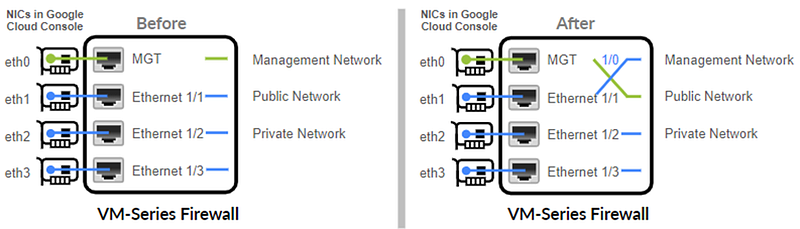

By default, the virtual network interface eth0 maps to the MGT interface on the firewall and eth1 maps to ethernet 1/1 on the firewall. Because the NLB can send traffic only to the primary interface of the next hop load-balanced VM, the VM-Series firewall must be able to use the primary interface for dataplane traffic.

To allow the firewall to send and receive dataplane traffic on eth0 instead of eth1, you must swap the mapping of the virtual interfaces within the firewall such that eth0 maps to ethernet 1/1 and eth1 maps to the MGT interface on the firewall.
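
The effect of the swap can be pictured as a simple lookup table. This is a toy model written for this post, not anything the firewall exposes:

```python
def interface_mapping(swap_enabled: bool) -> dict:
    """Map GCP virtual NICs to firewall interfaces, with or without the swap."""
    if swap_enabled:
        # After the swap, the primary NIC carries dataplane traffic.
        return {"eth0": "ethernet1/1", "eth1": "MGT"}
    # Default mapping: the primary NIC is the management interface.
    return {"eth0": "MGT", "eth1": "ethernet1/1"}
```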

To swap the interfaces, you have the following options:

At creation:

- In the Create Instance form, enter a key-value pair in the Metadata field, where mgmt-interface-swap is the key and enable is the value.

- Create a bootstrap file that includes the mgmt-interface-swap operational command in the bootstrap configuration. In the Create Instance form, enter a key-value pair in the Metadata field to enable the bootstrap option.

From the VM-Series firewall: Log in to the firewall and issue the following command:

set system setting mgmt-interface-swap enable yes

If the firewall is deployed from Firewall Network Setup, the interface swap is taken care of by Aviatrix.
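
If you deploy the instance yourself through automation rather than the Create Instance form, the creation-time key-value pair has to end up in the instance metadata. The sketch below builds that metadata in the key/value item shape the Compute Engine API uses; the helper name is hypothetical, and the rest of the instance definition is left out.

```python
def instance_metadata(swap_interfaces: bool = True) -> dict:
    """Build a metadata body for a firewall instance (illustrative helper)."""
    items = []
    if swap_interfaces:
        # Key and value taken from the creation-time option described above.
        items.append({"key": "mgmt-interface-swap", "value": "enable"})
    return {"items": items}
```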

Consumption Models

A PAYG license is a usage-based or pay-per-use license. You can purchase a PAYG license from the GCP Marketplace. Google bills hourly for the GCP PAYG licenses. PAYG licenses are available in the following bundles:

- Bundle 1: Includes a VM-Series capacity license, intrusion prevention system (IPS), antivirus (AV), and malware prevention, and a premium support entitlement.

- Bundle 2: Includes a VM-Series capacity license, IPS, AV, and malware prevention, GlobalProtect™, WildFire, DNS Security, PAN-DB URL Filtering licenses, and a premium support entitlement.

BYOL and VM-Series ELA: You purchase this license from a partner, reseller, or directly from Palo Alto Networks. VM-Series firewalls support all capacity, support, and subscription licenses in BYOL.

Summary

Onboard GCP

When you create a cloud account in the Aviatrix Controller for GCloud, you will be asked to upload a GCloud Project Credentials file. The steps to download the credentials file from the Google Developer Console are detailed here:

https://docs.aviatrix.com/HowTos/CreateGCloudAccount.html

Create VPCs and Subnets

I’m going to create the following VPCs and subnets:

- Management VPC

- Egress VPC

- Transit FireNet LAN

- Transit FireNet VPC

For testing purposes, I’m going to create two spokes:

- spoke3 vpc

- spoke4 vpc

Looking at VPC networks in the GCP console:

Launch Aviatrix Gateways

From the Multi-Cloud transit menu, we launch a transit gateway:

We have to enable the Transit FireNet function at deployment.

Enable Transit Firenet on Transit Gateway

Once the transit gateways are deployed, we have to enable the FireNet functionality:

Enable Transit Firenet on Aviatrix Egress Transit Gateway

By default, FireNet is configured to inspect inbound and east-west traffic but not outbound. To enable outbound (egress) inspection, go to the Firewall Network Setup menu and click Enable Transit Firenet on Aviatrix Egress Transit Gateway:

Firewall Configuration

The following APIs should be enabled:

- Compute Engine API

- Cloud Deployment Manager V2 API

- Cloud Runtime Configuration API
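
A small helper can make this prerequisite check explicit. The sketch below compares a list of enabled APIs against the three listed above. It works on display names only, and how you obtain the list of enabled APIs (console, CLI, or API) is left open.

```python
# API display names as listed above.
REQUIRED_APIS = {
    "Compute Engine API",
    "Cloud Deployment Manager V2 API",
    "Cloud Runtime Configuration API",
}


def missing_apis(enabled_apis) -> list:
    """Return the required APIs that are not in the enabled list, sorted by name."""
    return sorted(REQUIRED_APIS - set(enabled_apis))
```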

From the Firewall Network Setup, I launched the creation of a VM-Series:

VM-Series Configuration

After the launch is complete, the console displays the VM-Series instance with the public IP address of its management interface and allows us to download the .pem file for SSH access to the instance. Select the instance and click Actions. A drop-down menu will appear with the option to download the key:

Change the .pem file permissions to restrict access to the owner only.
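
On Linux or macOS, this is what chmod 400 does from a shell. If you script the setup, the same thing can be done from Python as sketched below. The function name is hypothetical, and the path is whatever file you downloaded.

```python
import os
import stat


def restrict_to_owner(path: str) -> None:
    """Make the key file readable by its owner only (equivalent to chmod 400)."""
    os.chmod(path, stat.S_IRUSR)
```

SSH clients typically refuse a private key file that other users can read, which is why this step comes before the first login.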

Change Password

SSH to the instance and change the password for the admin user:

configure

set mgt-config users admin password

commit

License

If the VM-Series is not a PAYG instance, license it by going to Device -> Licenses -> License Management in the bottom right corner:

Interfaces Configuration

Uncheck “Automatically create default route pointing to default gateway provided by server”

- Ethernet1/1 is the external/untrusted/WAN interface

- Ethernet1/2 is the internal/trusted/LAN interface

Health Check

PAN requires an interface management profile to reply to health probes from an NLB:

The management profile is attached to the interface under the advanced tab:

DNAT Policy for Health Check

Unlike a device-based or instance-based load balancer, Internal TCP/UDP Load Balancing doesn’t terminate connections from clients. Instead, traffic is sent to the backends directly. For that reason, the firewall needs a DNAT policy that translates the destination of the health check packets to the firewall interface IP address. Go to Policies -> NAT -> Add NAT:

- Source Zone (LAN) -> Destination Zone (LAN)

- Destination Interface: ethernet1/2

- Source Address: 35.191.0.0/16 and 130.211.0.0/22 (GCP fixed ranges)

- Destination Address: ILB address (LAN Subnet .99 and .100)

- Destination Translation: PAN LAN nic2 IP address
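
To make the intent of the rule concrete, here is a small Python model of what the DNAT policy does to a packet. The ILB and firewall addresses are hypothetical placeholders (a made-up 10.0.2.0/24 LAN subnet). Only the two health check ranges come from the text.

```python
import ipaddress

# GCP health check source ranges from the NAT rule above.
HEALTH_CHECK_RANGES = [
    ipaddress.ip_network("35.191.0.0/16"),
    ipaddress.ip_network("130.211.0.0/22"),
]

# Hypothetical addresses, for illustration only.
ILB_ADDRESSES = {"10.0.2.99", "10.0.2.100"}
FIREWALL_LAN_IP = "10.0.2.10"


def translate_destination(source: str, destination: str) -> str:
    """Return the destination address after the health check DNAT rule is applied."""
    src = ipaddress.ip_address(source)
    from_probe = any(src in network for network in HEALTH_CHECK_RANGES)
    if from_probe and destination in ILB_ADDRESSES:
        # Probe sent to the ILB frontend: rewrite to the firewall LAN interface.
        return FIREWALL_LAN_IP
    # Anything else is left untouched by this rule.
    return destination
```

Only probes from the health check ranges addressed to the ILB frontends are rewritten. Regular spoke traffic passing through the firewall does not match the rule.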

Security Policy for Health Check

Create a security policy granting GCP health check ranges access:

Static Routes for Health Check

This step is not required if the Vendor Firewall Integration is leveraged.

Create static routes that force PAN to reply to the health checks on the LAN (internal) interface:
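
The routes to create can be derived from the LAN subnet. The sketch below is an illustration under two assumptions: the next hop is the LAN subnet gateway, which in GCP is the first usable address of the subnet, and the LAN interface is ethernet1/2 as configured earlier. The subnet in the usage example is a placeholder.

```python
import ipaddress

# GCP health check source ranges.
HEALTH_CHECK_RANGES = ["35.191.0.0/16", "130.211.0.0/22"]


def health_check_routes(lan_subnet: str, interface: str = "ethernet1/2") -> list:
    """Build one static route per health check range via the LAN subnet gateway."""
    network = ipaddress.ip_network(lan_subnet)
    # Assumption: the gateway is the first usable address of the subnet.
    next_hop = str(network[1])
    return [
        {"destination": destination, "interface": interface, "next_hop": next_hop}
        for destination in HEALTH_CHECK_RANGES
    ]


# Example with a placeholder LAN subnet.
for route in health_check_routes("10.0.2.0/24"):
    print(route)
```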

After those configs (don’t forget to commit), the ILBs should be healthy:

Firenet Policy

To inspect traffic from spokes 3 and 4, we have to add them to the Inspection Policy using the submenu Policy under the Firewall Network menu:
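
Conceptually, the Inspection Policy decides which flows are steered to the firewalls: traffic in or out of a spoke on the list gets inspected. The toy model below captures that rule. It is not how the Controller is implemented, and the names are only examples.

```python
def needs_inspection(source_spoke: str, destination_spoke: str, inspected: set) -> bool:
    """Return True if either end of the flow is a spoke in the inspection policy."""
    return source_spoke in inspected or destination_spoke in inspected


# Spokes 3 and 4 added to the policy, as in this lab.
inspection_policy = {"spoke3", "spoke4"}
print(needs_inspection("spoke3", "spoke4", inspection_policy))  # prints True
```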

Vendor Firewall Integration

This step automatically configures the RFC 1918 and non-RFC 1918 routes between the Aviatrix Gateway and the vendor’s firewall instance, in this case Palo Alto Networks VM-Series. This can also be done manually through the cloud portal and/or the vendor’s management tool.

- Make sure the Palo Alto Networks management interface has ping enabled. Go to Device -> Interfaces -> Management

- Make sure the Aviatrix Controller can ping the PAN instances

- Create a new role profile and name it Aviatrix-API-Role: Go to Device -> Admin Roles -> XML/Rest API and select Report, Configuration, Operational Requests, and Commit:

- Add an administrator for the role created above. Go to Device -> Administrators -> +Add:

- Configure Aviatrix:

Testing

Deploy GWs at Spokes

Using the Gateway menu, we deploy GWs for spokes 3 and 4:

Attach GWs to the Transit

Using Multi-Cloud Transit Setup -> Attach/Detach, we attach spokes 3 and 4 to the transit gateway:

Although the Transit Gateway is now attached to the Spoke Gateways, it will not route traffic between Spoke Gateways.

Enable Transit

By default, spoke VPCs are in isolated mode where the Transit will not route traffic between them. To allow the Spoke VPCs to communicate with each other, you must enable Connected Transit:
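
The behavior can be summed up in a few lines of Python. This is a toy model of the rule described above, with hypothetical names, not the gateway's actual logic:

```python
def transit_routes_between(source: str, destination: str,
                           attached_spokes: set, connected_transit: bool) -> bool:
    """Return True if the transit forwards traffic between the two spokes."""
    both_attached = source in attached_spokes and destination in attached_spokes
    # Attachment alone is not enough: spokes stay isolated until
    # Connected Transit is enabled.
    return both_attached and connected_transit
```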

East-West Traffic Flow

From the spoke3 instance, we try to SSH into the spoke4 instance using the Google Cloud console:

A packet capture on the PAN shows the SSH attempts:

We can use FlightPath to check and troubleshoot if required:

FlightPath will check the GCP VPC network firewall rules and the spoke and transit gateway route tables:

References

https://docs.aviatrix.com/HowTos/CreateGCloudAccount.html
